Hermes Agent Is Taking Off: A Plain-English Setup Guide

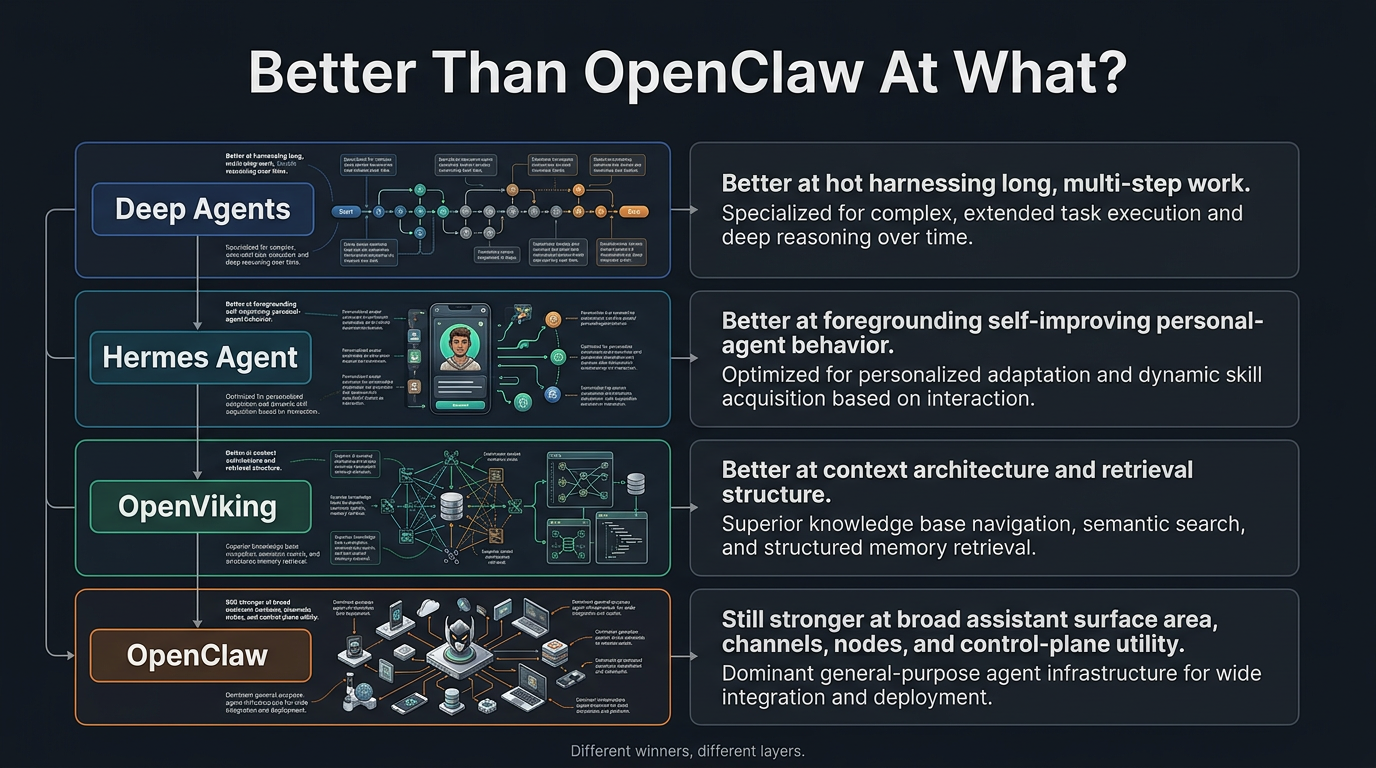

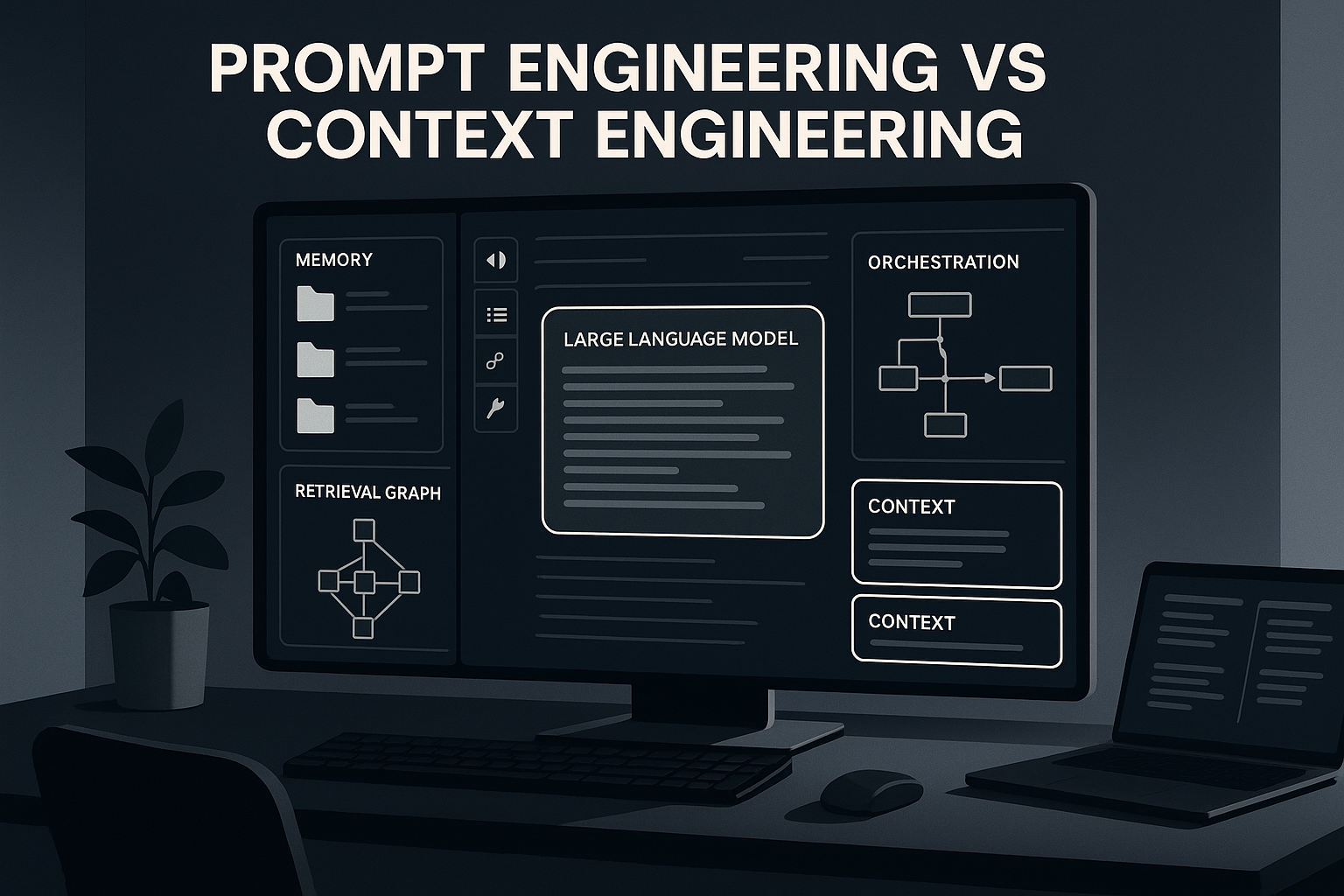

Hermes Agent is gaining attention because it remembers, runs from messaging apps, uses tools, creates reusable skills, and can be self-hosted. This guide explains, in plain English, why it is taking off and how to approach setup safely.