If you asked this question a year ago, the honest answer was still a little annoying.

Yes, there were open-source TTS models. Yes, there were hobbyist voice-cloning projects. Yes, you could cobble together something local.

But if what you actually wanted was something that felt like a real alternative to ElevenLabs, the list got thin fast.

That has changed.

Open-source TTS in 2026 is not one project. It is a whole stack of competing approaches, and some of them are now legitimately good enough to matter for real products, local workflows, and privacy-sensitive deployments.

The catch is that people keep mashing together 3 different things:

- plain text-to-speech

- zero-shot voice cloning

- commercially usable open models

Those are not the same category.

A model can sound great and still be useless for a business because of its license. A model can be open-weight and still hide the best cloning features behind a hosted product. A model can be incredibly fast and still not actually clone voices well.

So the better question is not “what is the open-source ElevenLabs clone?”

It is this:

Which open TTS systems are actually good, which ones really clone voices, and which ones are safe to use in production?

The short version

If you want the practical answer fast:

- Best commercially usable open replacement: Qwen3-TTS

- Best quality-first open release with license caveats: Fish Audio S2 Pro

- Best modern developer stack: Chatterbox

- Best multilingual-heavy option: CosyVoice 3.0

- Best old reliable cloning workhorse: XTTS-v2

- Best tinkerer stack: GPT-SoVITS

- Best tiny local runtime: Kokoro

- Most interesting wildcard: Mistral Voxtral TTS

The important part is not picking one winner.

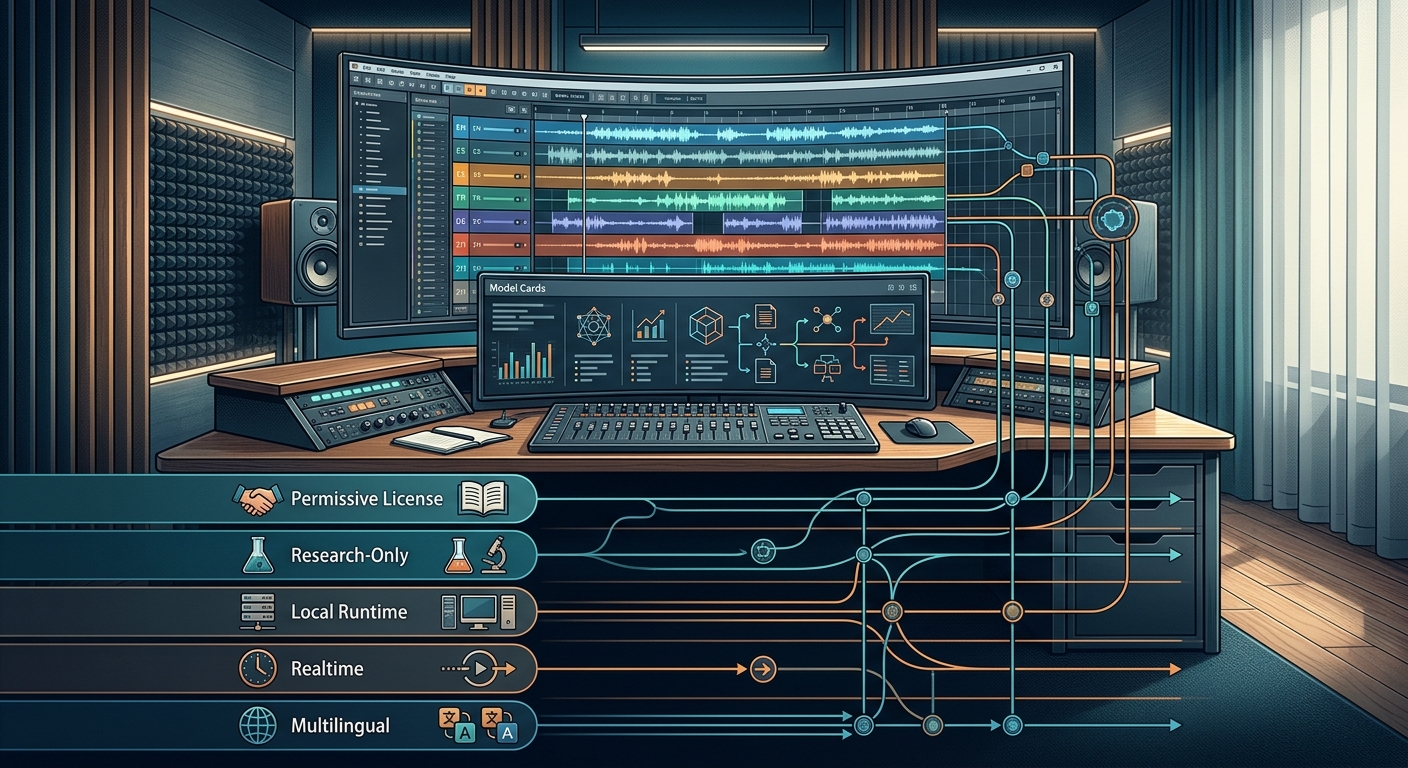

The important part is separating permissive commercial stacks, research-grade releases, local tiny runtimes, and genuine cloning systems from plain TTS.

First, separate TTS from voice cloning

A lot of people say “voice cloning” when they really just mean “good TTS.”

That blurs together 2 very different problems.

Plain TTS means the model reads text in one of its built-in or designed voices.

Voice cloning means you give it a short reference clip, usually somewhere between 3 and 10 seconds, and it tries to preserve that speaker’s timbre, cadence, accent, and sometimes emotional profile.

That distinction matters because some models are great at one and mediocre at the other.

It also matters because a lot of smaller models are correctly impressive, but impressive in the wrong category.

KittenTTS is a good example. It is genuinely exciting as a tiny local TTS model. That does not automatically make it a strong ElevenLabs substitute if your actual requirement is cloning a specific voice for narration, support agents, or multilingual dubbing.

Second, separate open-source from open-weight from commercially usable

This is where a lot of the “free ElevenLabs alternative” hype gets sloppy.

Some projects are permissive enough to use commercially without much drama.

Some are open-weight, but non-commercial.

Some are under research licenses.

Some expose their best custom-voice or cloning UX in the hosted product while the public release is a narrower slice.

That means there are really 3 filters you have to apply:

- Does it sound good?

- Does it actually clone voices well?

- Can you legally and operationally use it for your use case?

A surprising number of promising projects fail on filter 3.

The strongest open alternatives right now

1. Qwen3-TTS

If I had to pick one model family that best balances capability, flexibility, and commercial friendliness right now, it would probably be Qwen3-TTS.

Qwen’s release is serious.

It supports voice design, custom voice control, streaming, and rapid voice cloning from short reference audio. The official release covers 10 languages and makes a strong pitch around low-latency speech generation, natural-language voice control, and real-time deployment.

That already sounds good.

The part that matters even more is the licensing. Qwen3-TTS is released under Apache 2.0, which immediately makes it more interesting than a bunch of flashier releases that look better on demos but become awkward the minute you try to use them in a real commercial workflow.

That does not mean Qwen3-TTS is perfect.

Some practitioner discussion suggests it can sound a little flatter than the most expressive frontier models unless you tune it carefully. That tracks with a lot of modern open audio systems: the raw capability is there, but getting the last 10 percent of naturalness still takes judgment.

Still, if your question is “what can I actually ship without turning the legal review into a side quest?” Qwen3-TTS is one of the best answers on the board.

2. Fish Audio S2 Pro

On raw ambition, Fish Audio S2 Pro is one of the most impressive models in the category.

The official release claims 80+ language support, strong zero-shot cloning from short references, multi-speaker handling, streaming inference, and unusually rich prosody control through inline natural-language tags like [whisper], [laughing], or much more specific style instructions.

That is a big deal.

A lot of open TTS systems still feel like you are negotiating with the model. Fish is trying to make speech control feel more like language control.

The architecture and serving story are also clearly aimed at serious usage rather than toy demos. Their docs lean into batching, cache efficiency, streaming, and serving on modern inference stacks. That is the behavior of a team trying to win infrastructure, not just attention.

There is just one giant catch: the model is under a research license.

That does not make it useless. It does make it very different from a clean commercial ElevenLabs replacement.

So the honest framing is this:

Fish S2 Pro is one of the best open releases for people who care about quality, expressiveness, and multilingual reach. It is not the easiest answer if you need a safe, boring, production license.

3. Chatterbox

Chatterbox, from Resemble AI, is one of the more interesting releases because it seems to understand what developers actually want.

Not just quality.

Usability.

The model family includes a lower-latency Turbo variant, a multilingual variant covering 23+ languages, and support for voice cloning from reference audio. It also includes paralinguistic tags like [laugh], [cough], and [chuckle], which matter more than they sound like they should. Synthetic speech often fails not because the words are wrong, but because the delivery is too sterile.

Chatterbox is trying to fix that.

It also has a clearer product-minded shape than a lot of academic or hobbyist releases. There is a direct path from “I want to try this” to “I can actually integrate this.”

That makes it one of the best candidates for people who want an open system that does not feel like an archeology project.

One thing worth watching closely is the exact licensing and packaging across versions. The project is very promising, but whenever a model family straddles open releases and commercial services, it is worth being precise about what is open, what is benchmarked, and what assumptions those comparisons depend on.

4. CosyVoice 3.0

A lot of TTS evaluation still overweights English demos.

That is understandable, but misleading.

If what you actually need is multilingual speech synthesis, cross-lingual cloning, dialect handling, and controllability around pronunciation, CosyVoice 3.0 deserves much more attention than it usually gets in casual English-language discussions.

The official project leans hard into exactly those strengths:

- zero-shot multilingual cloning

- cross-lingual support

- 9 major languages

- a long list of Chinese dialects and accents

- streaming support

- controllable pronunciation via Pinyin and CMU phoneme inpainting

That last part matters a lot in production. One of the fastest ways to hate a TTS system is to listen to it misread names, numbers, abbreviations, or mixed-language text over and over.

CosyVoice is not the smallest or simplest option here. But if your real goal is multilingual deployment rather than just English narration, it has a strong case.

5. OmniVoice

If your evaluation lens is language coverage first, OmniVoice immediately jumps out.

The project claims 600+ languages, zero-shot cloning, voice design, non-verbal control like [laughter], pronunciation guidance, and very fast inference. That is not a small extension of the current open TTS playbook. That is a direct attempt to widen the category.

That makes OmniVoice interesting for 2 reasons.

First, it is aimed at a genuinely hard problem. A lot of open TTS discussion quietly collapses into English, maybe with a few major European and Asian languages bolted on. OmniVoice is trying to make omnilingual speech synthesis a first-class feature rather than a nice extra.

Second, the feature set is pointed in the right direction for real products: cloning, design, controllability, and speed all in one place. If those claims survive wider practitioner testing, this could become one of the most important multilingual open releases in the space.

The catch is that breadth claims need verification more than demo-page admiration. A model can technically support hundreds of languages and still have very uneven quality across the long tail.

So the right posture is interest, not instant coronation.

Still, OmniVoice absolutely belongs in the serious-shortlist conversation now.

6. VoxCPM2

VoxCPM2 feels notable for a different reason.

It is not just pitching quality. It is pitching a full modern product shape: Apache-2.0, 30 languages, tokenizer-free generation, controllable voice cloning, voice design, 48kHz output, and streaming-oriented performance claims.

That combination matters.

A lot of audio releases make one strong impression, then fall apart when you ask the practical questions. Can I use it commercially? Does it stream? Is cloning actually part of the public release? Is the output quality high enough for polished narration or voice agents?

VoxCPM2 is interesting because it seems to be trying to answer all of those questions at once.

The tokenizer-free architecture is also worth paying attention to. Whether it ends up being the long-term winning design or not, it is part of a broader pattern: open TTS systems are getting less toy-like and more end-to-end.

If I were building a serious shortlist for current open voice stacks, VoxCPM2 would be on it.

7. Mistral Voxtral TTS

Mistral’s Voxtral TTS is one of the most interesting new entries because the quality bar appears high enough that people immediately started comparing it to ElevenLabs rather than to other open demos.

That is a compliment.

The official docs position it as a low-latency TTS model with zero-shot cloning from short prompts, multilingual support, and suitability for voice agents. The Hugging Face release page frames it as a frontier open-weights model with strong real-time characteristics.

So why not just call it the winner?

Because the practical story is messier.

First, the open-weight model inherits a CC BY-NC 4.0 license from the included reference voices. That is already a major limitation for commercial use.

Second, community discussion, especially in LocalLLaMA, quickly zeroed in on whether the open release really matches the cloning/custom-voice story implied by the product messaging. Several practitioners came away believing the hosted stack exposes more of the interesting custom-voice functionality than the public release does.

That does not make Voxtral unimportant.

It means you should treat it as a serious high-quality open-weight TTS release with real caveats, not as a universal ElevenLabs replacement.

If Mistral eventually widens the licensing and makes the local feature story cleaner, it could become one of the strongest entries in the whole category.

Right now, it is more complicated than the headline suggests.

The workhorses still matter

XTTS-v2

Open-source categories do not reset every time a newer model drops.

XTTS-v2 is still relevant because it is widely known, multilingual, clone-capable, and deeply embedded in the workflows of people who have been doing local speech generation for a while.

The official model page still presents a solid proposition:

- 6-second voice cloning

- 17 languages

- cross-language cloning

- support through the broader Coqui TTS stack

It is not the shiny new thing anymore.

But installed base matters. Documentation matters. Familiarity matters.

If you want the audio equivalent of a dependable older framework that lots of people know how to operate, XTTS-v2 is still part of the conversation.

The main downside is the license. Coqui’s public model license is not the same thing as a clean Apache or MIT setup, so you still need to pay attention before assuming it is a drop-in commercial answer.

GPT-SoVITS

If XTTS-v2 is the dependable workhorse, GPT-SoVITS is the enthusiast’s garage.

It remains one of the most popular voice-cloning projects in the open world because it gives people a lot of room to push beyond default inference.

The pitch is attractive:

- zero-shot TTS from around 5 seconds of reference audio

- better similarity with about 1 minute of fine-tuning data

- cross-lingual support

- lots of WebUI and workflow support

That makes it especially compelling for creators and experimenters who care about matching a voice more closely and are willing to do more setup.

It also means the experience is less polished.

GPT-SoVITS is less of a neat product and more of a workbench. For some people, that is the appeal.

For others, that is the warning label.

The tiny local models are real, but don’t oversell them

Kokoro

Kokoro matters because it makes local TTS cheap and boring in the best possible way.

It is only 82M parameters, Apache licensed, cheap to serve, and clearly designed to be embedded in real systems without drama.

That is powerful.

A lot of teams do not need the last drop of dramatic realism. They need speech that is fast, affordable, local, and good enough.

Kokoro fits that shape really well.

Where people get carried away is treating every strong small model as if it automatically belongs in the “kills ElevenLabs” tier.

That is not quite right.

Kokoro is one of the best examples of how far efficient open TTS has come. It is less convincing as the single answer for highest-end voice cloning where similarity and expressiveness are the whole game.

KittenTTS

KittenTTS got attention for a good reason.

A TTS model in the 15M to 80M range, running on CPU and targeting local deployment, is a big deal. That kind of footprint changes what is possible on laptops, edge boxes, and mobile-ish environments.

But it is important not to confuse category excitement with direct task replacement.

The official project emphasizes built-in voices, ONNX runtime, tiny models, and CPU-first deployment. In community discussion, the developers were explicit that zero-shot voice conversion/cloning was not part of this series yet.

So the right way to talk about KittenTTS is not “this replaces ElevenLabs.”

It is “this shows how far tiny local TTS has come, and it points toward a future where a lot more speech workloads move on-device.”

That is still a meaningful story.

It is just a different story.

What people on X and Reddit actually care about

The pattern is pretty simple.

People do not just want a model that sounds good in a demo. They want some combination of:

- a clean commercial license

- local or offline deployment

- low enough latency for voice agents

- multilingual coverage

- a setup that does not feel like surgery

That is why the same few names keep coming up.

On X, the biggest theme is compression. Open TTS is no longer a toy category. Chatterbox, Qwen3-TTS, Fish Speech, and Voxtral all show up in that broader story: the quality gap with paid APIs is narrowing fast.

The second theme is licensing. The minute people try to wire these models into real products, the conversation changes from excitement to legal realism.

That is why Qwen3-TTS gets so much practical respect. It is not just good. It is good and permissive.

On Reddit, the Voxtral thread was even more blunt. People were interested in the quality and the small footprint, then immediately asked the only question that matters in production: what is actually available in the open release, and under what license?

So, can open-source really replace ElevenLabs now?

Yes, with an asterisk.

If all you want is strong local TTS, the answer is already yes.

If you want real short-reference voice cloning with modern quality, the answer is also yes, though the best options still come with tradeoffs.

If you want one neat package that gives you ElevenLabs-level quality, permissive licensing, low latency, multilingual reach, and a polished product experience all at once, that still does not really exist.

What does exist now is a real market.

There are strong options for:

- commercial deployment

- expressive research use

- multilingual synthesis

- local tiny runtime

- fine-tuning and cloning workflows

That is why the category finally feels mature. It stopped being one flashy demo and started looking like infrastructure.

My current picks

If I were sorting the category by actual usefulness today, my rough shortlist would look like this:

Best all-around commercial starting point

- Qwen3-TTS

Best frontier-quality open release if license constraints do not scare you

- Fish Audio S2 Pro

Best modern open stack for developers building voice apps

- Chatterbox

Best multilingual-heavy option

- CosyVoice 3.0

Best established community workhorse

- XTTS-v2

Best power-user cloning lab

- GPT-SoVITS

Best tiny deploy-anywhere TTS option

- Kokoro

Most ambitious multilingual swing

- OmniVoice

Best newer open stack to watch closely

- VoxCPM2

Most interesting new wildcard

- Mistral Voxtral TTS

Listen to a few public examples

These are public demo outputs pulled from official public Spaces or demos so you can get a rough feel for how different systems sound in practice.

They are not a perfect apples-to-apples benchmark. The prompts, voices, and settings differ. But they are still useful for quickly calibrating the category.

Public sample from the official Chatterbox demo.

This is a public demo output, not a normalized benchmark clip.

Source: Official Chatterbox HF demo

Public sample from the official Qwen3-TTS demo.

Sample generated from the public demo using voice-design mode, so this reflects synthesis quality more than strict cloning similarity.

Source: Official Qwen3-TTS HF demo

Public sample from the official KittenTTS demo.

Useful mainly as a size-and-speed reference point. It is more of a tiny local TTS model than a full ElevenLabs replacement.

Source: Official KittenTTS HF demo

I may add more samples here over time, especially once there are cleaner public examples for Voxtral, Fish S2 Pro, CosyVoice, and XTTS-v2 that are easy to compare without signing in or generating fresh audio.

Sources and signals

A few references that helped shape this view:

- free-voice-clone landscape repo: https://github.com/0xSojalSec/free-voice-clone

- Qwen3-TTS official repo: https://github.com/QwenLM/Qwen3-TTS

- Qwen3-TTS paper: https://arxiv.org/abs/2601.15621

- Fish Speech / Fish Audio S2 official repo: https://github.com/fishaudio/fish-speech

- Fish Audio S2 technical report: https://arxiv.org/abs/2603.08823

- Chatterbox official repo: https://github.com/resemble-ai/chatterbox

- Chatterbox model page: https://huggingface.co/ResembleAI/chatterbox

- CosyVoice official repo: https://github.com/FunAudioLLM/CosyVoice

- Kokoro official repo: https://github.com/hexgrad/kokoro

- Kokoro model page: https://huggingface.co/hexgrad/Kokoro-82M

- XTTS-v2 model page: https://huggingface.co/coqui/XTTS-v2

- GPT-SoVITS official repo: https://github.com/RVC-Boss/GPT-SoVITS

- KittenTTS official repo: https://github.com/KittenML/KittenTTS

- OmniVoice model page: https://huggingface.co/k2-fsa/OmniVoice

- OmniVoice official repo: https://github.com/k2-fsa/OmniVoice

- VoxCPM official repo: https://github.com/OpenBMB/VoxCPM

- VoxCPM2 model page: https://huggingface.co/openbmb/VoxCPM2

- Mistral Voxtral TTS docs: https://docs.mistral.ai/capabilities/audio/text_to_speech

- Mistral Voxtral TTS model page: https://huggingface.co/mistralai/Voxtral-4B-TTS-2603

- Microsoft VibeVoice repo: https://github.com/microsoft/VibeVoice

- Practitioner discussion on X around Chatterbox, Fish Speech, Qwen3-TTS, GPT-SoVITS, XTTS-v2, and Voxtral

- Reddit discussion on Mistral Voxtral TTS in r/LocalLLaMA: https://www.reddit.com/r/LocalLLaMA/comments/1s46ylj/mistral_ai_to_release_voxtral_tts_a/

- Reddit discussion on KittenTTS in r/LocalLLaMA: https://www.reddit.com/r/LocalLLaMA/comments/1mhyzp7/kitten_tts_sota_supertiny_tts_model_less_than_25/