The phrase “agent memory” sounds tidy.

It isn’t.

By 2026, the category has split into several very different ideas hiding behind the same label: raw conversational recall, profile memory, reflective memory, coding-agent memory, context operating systems, and enterprise context APIs. Some tools are really search systems with a better story. Some are profile builders with a vector store bolted on. Some are trying to become an actual cognitive substrate for agents.

That’s why this market feels confusing. Everyone says “memory.” They do not mean the same thing.

Most so-called memory systems are optimizing different failure modes: forgetting, retrieval drift, task-state loss, profile incoherence, or collaboration across agents.

The short version

If you strip away the branding, the current memory stack breaks down like this:

- MemPalace bets on storing raw material and making it navigable

- Mem0 bets on a universal memory API with extraction, entity linking, and hybrid retrieval

- Honcho bets on stateful social memory, representations, and continual learning around entities

- Hindsight bets on reflective memory, world models, experiences, and multi-path retrieval

- OpenViking treats memory as only one part of a larger context operating system

- ByteRover treats memory as a versioned context tree for coding agents and teams

- Supermemory is building an API-first context platform that blends profiles, connectors, extractors, and graph memory

And then there’s a second tier of names with much weaker signal right now.

- Holographic does not appear to have a clearly established agent-memory product footprint yet

- RetainDB was difficult to substantiate with strong public technical material during this research pass

That difference matters.

A good field guide should not pretend weakly documented projects are equal to mature ones just because they fit the headline.

What makes a good agent memory system?

Before comparing tools, it helps to ask the obvious question: what is “good” memory for an agent?

The answer is not “largest context window.” That’s the lazy version.

A serious memory layer should do 6 things well:

- Ingest selectively or intentionally

- raw logs, extracted facts, summarized state, files, tool traces, or all of the above

- Preserve update semantics

- old facts, changed facts, contradictions, temporal drift, and per-entity state transitions

- Retrieve with multiple signals

- semantic similarity alone is not enough

- Compress without destroying meaning

- summaries are useful until they sand off the reason something mattered

- Stay observable

- if retrieval fails, you need to know why

- Fit the real workload

- coding, support, research, personal assistants, and enterprise copilots do not need the same memory shape

That leads to the main split in the market.

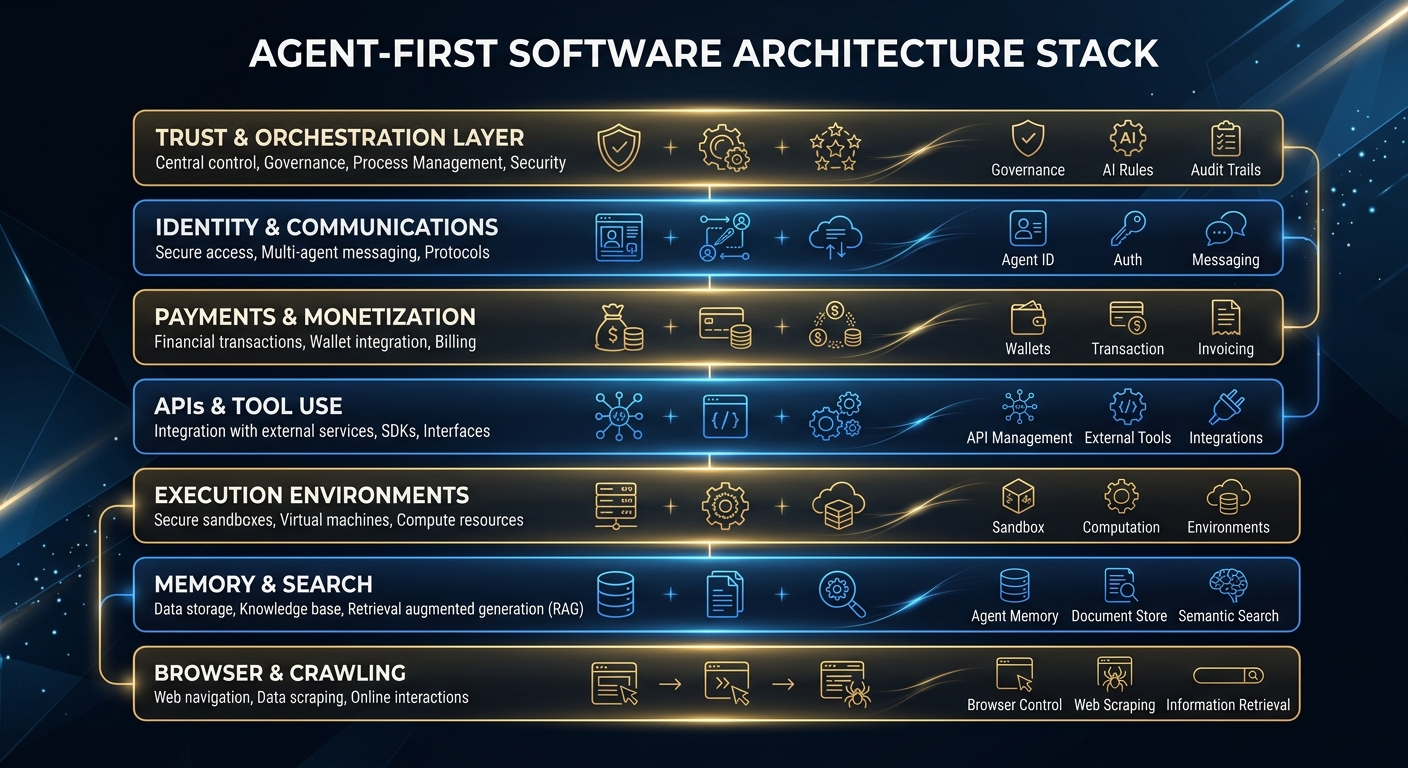

The 5 major design camps

1) Raw recall systems

These systems say: don’t let an LLM prematurely decide what matters. Store more, summarize less, and retrieve the right slices later.

This camp includes MemPalace most clearly.

2) Extracted profile memory systems

These systems convert conversations into structured, reusable memory objects: preferences, entities, facts, user traits, session state.

This is the center of gravity for Mem0, parts of Honcho, and a lot of commercial “memory layer” products.

3) Reflective / learning memory systems

These systems want more than recall. They want memory to support inference, reflection, self-improvement, and behavior change over time.

This is where Hindsight is most distinct.

4) Context operating systems

These systems say memory is only one part of the problem. The real job is managing memory, resources, skills, and retrieval trajectories in one coherent hierarchy.

That’s OpenViking.

5) Coding-agent memory systems

These systems optimize for codebases, projects, teams, and long-running engineering workflows. They care about persistent project knowledge more than personal biography.

That’s where ByteRover stands out.

Quick comparison table

| System | Icon | Core idea | Best fit | Main risk |

|---|---|---|---|---|

| MemPalace |  | Store raw conversations and project data, then search with structure | Personal/coding agents that need verbatim recall | Hype outran documentation in places, and some early claims needed correction |

| Mem0 |  | Universal memory layer with extraction + entity linking + hybrid retrieval | Broad app builders and agent products | Can collapse into “smart profile + search” if you need deeper task memory |

| Honcho | Stateful memory around entities, peers, sessions, and representations | Social agents, user modeling, adaptive assistants | More infrastructure-heavy than lightweight builders may want | |

| Hindsight |  | Biomimetic memory with retain / recall / reflect | Agents that need learning, reflection, and longer behavioral adaptation | More ambitious system, more moving parts |

| OpenViking |  | Filesystem-style context database for memory, resources, and skills | Complex agent stacks that need hierarchical context management | Broader surface area means steeper setup and more architecture decisions |

| ByteRover |  | Versioned context tree for coding agents | Engineering teams and coding agents | Purpose-built orientation can feel narrow outside dev workflows |

| Supermemory | API-first context engineering platform with profiles, graph, extractors, connectors | Enterprises, plugin ecosystems, multi-tool memory | More platform-y than deeply open technical substrate |

The icons above intentionally pull from project assets or official sites. That’s useful visually, but it’s also a reminder: some of these projects present themselves more cleanly than they document themselves.

1) MemPalace

GitHub: MemPalace/mempalaceMemPalace is one of the more interesting entrants because it pushes back on the now-standard “extract a few facts and call it memory” pattern.

Its thesis is blunt: most memory systems throw away too much context too early.

What it is actually doing

From the public README and benchmark notes, MemPalace centers on:

- raw verbatim storage in ChromaDB

- structural organization using a “palace” metaphor: wings, rooms, closets, drawers

- local-first operation

- search over conversations, projects, and generalized extracted categories

- optional AAAK compression as a separate layer, not the default storage mode

- MCP tooling for agent access

That basic idea is stronger than the marketing metaphor. The metaphor is decorative. The important part is verbatim preservation plus metadata structure.

The good

- Strong answer to the “summary drift” problem

- Local-first posture is appealing for private personal-agent workflows

- Honest correction cycle after the launch blowback, which I actually respect

- Better fit than many profile-memory products for “why did we decide this?” retrieval

The weak points

MemPalace also got caught overselling itself early.

Its own maintainers added a notable correction note after launch, clarifying that:

- the headline benchmark was from raw mode, not the compressed AAAK mode

- some token-count claims were off

- some framing around retrieval boosts and contradiction handling was too strong

That does not kill the project. But it does matter.

When a memory product is selling trust and recall, the standard for benchmark framing should be brutal.

Technical take

MemPalace is compelling when you want:

- raw conversational memory

- human-auditable retained text

- low-assumption storage

- a memory system that behaves more like a searchable archive than an opinionated summarizer

It is less compelling if you want:

- crisp mutation semantics

- strong per-entity canonicalization

- easy conflict resolution between old and new facts

- enterprise-managed APIs out of the box

My read: MemPalace is a strong corrective to over-compressed memory systems. It feels most useful for power users, personal agents, and coding/research contexts where exact wording still matters.

2) Mem0

GitHub: mem0ai/mem0 Mem0 DocsMem0 is probably the cleanest example of the memory layer as productized infrastructure.

It gives builders something immediately legible: an API, SDKs, hosted options, self-hosted options, extraction, search, and integrations.

What it is actually doing

Based on the current docs and README, Mem0’s newer stack emphasizes:

- add-only extraction

- user, session, and agent-level memory scopes

- entity extraction and linking

- hybrid retrieval across semantic, keyword, and entity signals

- open benchmark framing around LongMemEval / LoCoMo / BEAM

- both managed and open-source deployment paths

This is not trying to be poetic. It is trying to be a practical universal substrate.

The good

- Clean developer experience

- Good balance of self-hosted and managed options

- Explicit support for broad agent ecosystems

- Better than average at turning “memory” into something application teams can wire up quickly

- Entity linking matters more than most memory products admit

The weak points

Mem0 is strongest when the memory you want is extractable and reusable.

That means it shines on:

- preferences

- durable facts

- user profiles

- stable recurring constraints

- lightweight app personalization

It is weaker when the hard problem is:

- multi-step task state

- nuanced decision rationale

- preserving exact discussion context

- debugging retrieval failures inside a long-running agent system

Technical take

Mem0 sits in the sweet spot between “toy memory” and “cognitive operating system.”

That’s exactly why it’s popular.

It may not be the deepest answer to agent memory, but it is one of the most composable and product-ready ones.

My read: Mem0 is the safe default pick for builders who need memory now, especially if the main goal is personalization and durable factual context rather than agent introspection.

3) Honcho

GitHub: plastic-labs/honcho Honcho DocsHoncho is one of the more distinct systems in this group because it is explicitly organized around entities and relationships in ongoing interaction spaces.

Its primitives are not just “memories.” They are:

- workspaces

- peers

- sessions

- messages

- representations

- chat/search/context views over those entities

That architecture says a lot. Honcho is less interested in “retrieve 3 facts from a vector DB” and more interested in maintaining evolving state around people, agents, and groups.

What it is actually doing

From the public repo and docs:

- FastAPI service core

- Postgres + pgvector

- background “deriver” workers

- session history plus representations and summaries

- natural-language querying over peer state

- managed cloud option plus self-hosting path

This feels much closer to an operational memory backend than a single-purpose vector recall tool.

The good

- Good conceptual model for multi-entity systems

- Stronger than most on evolving state and social memory

- Better fit for assistants that need to maintain representations of users, collaborators, or persistent roles

- More explicit memory abstractions than “just store snippets and pray”

The weak points

- More infra than small hobby builds need

- Less lightweight than plug-and-play libraries

- Heavier dependence on service architecture and background workers

- Some of the sales language gets a little dramatic around “data moats” and retention outcomes

Technical take

Honcho looks strongest when the problem is relationship-aware continuity.

Think:

- adaptive tutoring

- recurring customer support agents

- persistent B2B assistants

- agents that need stable models of multiple participants over time

That is different from code memory, and different from raw conversational recall.

My read: Honcho is one of the better options if your agent needs to understand who is involved and how they evolve, not just what was said.

4) OpenViking

GitHub: volcengine/OpenViking OpenViking websiteOpenViking is not really “just another memory product.”

It is trying to make context the primary unit of infrastructure.

That’s a bigger and more ambitious claim.

What it is actually doing

OpenViking describes itself as a context database with a filesystem paradigm. The important pieces are:

- unified handling of memory, resources, and skills

- hierarchical directory-style organization

- tiered loading, often described as L0 / L1 / L2

- recursive retrieval via path plus semantic search

- visualization of retrieval trajectories

- automatic session management and self-iteration

This is one of the rare systems explicitly attacking the observability problem in agent context systems.

That matters a lot.

One of the biggest failures in agent memory today is not just retrieval quality. It’s that when retrieval goes bad, the system gives you almost no useful explanation.

OpenViking at least points in the right direction.

The good

- Strong framing around unified context management

- Better than most on context observability and structured retrieval paths

- Hierarchical organization feels closer to how humans manage real work

- A serious answer to the “RAG is a flat junk drawer” problem

The weak points

- Bigger mental model to learn

- Broader system means broader setup surface

- Can feel like infrastructure before product

- The filesystem metaphor is powerful, but only if the team using it is disciplined

Technical take

OpenViking is probably most interesting to people who already believe that context engineering is the real work now.

If you think memory is just “save some facts,” it may feel overbuilt.

If you’ve already hit the failure modes of flat RAG, opaque retrieval, and transcript sludge, it starts to look a lot more sane.

My read: OpenViking is one of the most important architectural projects in the category because it widens the frame. It treats memory as part of a full context substrate, which is probably where serious agents are headed anyway.

5) Hindsight

GitHub: vectorize-io/hindsight Hindsight DocsHindsight is aiming at something closer to memory that supports learning, not just retrieval.

That sounds fluffy until you look at the structure.

What it is actually doing

Hindsight organizes memory around:

- world facts

- experiences

- mental models

- retain / recall / reflect operations

- combined entity, relationship, temporal, sparse, and dense representations

- reciprocal rank fusion plus reranking

This is one of the more explicit attempts to build a memory pipeline with reflective synthesis rather than a memory cache with better search.

The good

- Clear distinction between raw experience and higher-order understanding

- Better than average story for autonomous agents that need to adapt

- Multi-path retrieval strategy is closer to how real memory systems should work

- Reflection as a first-class operation is genuinely useful

The weak points

- More complex system, therefore more tuning surface

- Strong benchmark claims always need scrutiny in this category

- For simpler assistants, this may be more machinery than necessary

Technical take

Hindsight looks best when the agent needs to:

- learn patterns from experience

- form durable higher-level models

- support more agentic decision-making over time

- handle temporal and causal retrieval, not just nearest-neighbor recall

This is not the right tool for everyone.

It may be the right tool when the goal is to make an agent change how it behaves as it accumulates experience.

My read: Hindsight is one of the more intellectually serious projects here. If it keeps execution quality high, it could end up mattering a lot more than its current footprint suggests.

6) ByteRover

GitHub: campfirein/byterover-cli ByteRover DocsByteRover is memory built from the perspective of coding agents, not general assistants.

That focus gives it a very different shape.

What it is actually doing

From the current CLI docs and README:

- project-scoped context tree

- curate / query / review flows

- version-control semantics for knowledge

- branch, commit, merge, push/pull for context itself

- cloud sync for teams

- worktree/source concepts for linked projects

- MCP support and integration across many coding-agent tools

This is smart.

Most coding-agent memory pain is not “remember my coffee order.” It’s:

- remember architectural decisions

- remember conventions in this repo

- remember which module owns what

- preserve reviewed knowledge across sessions and across agents

- keep bad or unreviewed memory from poisoning future work

ByteRover is one of the few products attacking that directly.

The good

- Strong workload fit for engineering teams

- Version-control model for knowledge is a big idea

- Review workflow is a real guardrail against garbage memory accumulation

- Better shared-memory story than most personal-agent memory layers

The weak points

- Less general outside coding and project knowledge

- The context-tree abstraction works best when the team actually curates it

- Cloud/product surface may be overkill for solo users who just want a tiny plugin

Technical take

ByteRover’s best insight is that agent memory should sometimes behave more like source control than like chat history.

That’s especially true in software.

Unreviewed memory is basically unreviewed code. It spreads bugs.

My read: ByteRover is one of the most practically grounded systems in this whole category because it understands the real operational pain of coding agents.

7) Supermemory

Supermemory Supermemory DocsSupermemory looks like a context platform trying to cover the full developer and enterprise memory stack in one API surface.

Its public positioning combines:

- user profiles

- memory graph

- retrieval

- extractors

- connectors

- plugins across coding tools and AI surfaces

What it is actually doing

From its public llms.txt and site materials, Supermemory is framing itself as a context engineering platform more than a narrow memory API.

That means:

- memory ingestion from multiple content types

- graph-oriented updates and contradictions

- connector-driven sync from external systems

- personal memory app plus enterprise API

- plugins across tools like Claude Code, Cursor, OpenClaw, and OpenCode

The good

- Broad product surface

- Strong awareness that memory needs connectors and extraction, not just storage

- Good fit for cross-tool users and API teams

- Clear recognition that profile memory and document memory need to coexist

The weak points

- More black-box platform feel than some open-source-first builders will like

- Public materials are polished, but the deepest technical guts are less inspectable than the strongest open-source alternatives

- The broadness is attractive, but it also makes it harder to tell where the moat really is

Technical take

Supermemory is interesting because it’s not pretending “agent memory” is one tiny API call. It is packaging the entire context ingestion and retrieval stack.

That is commercially smart.

Whether it becomes technically dominant depends on how well the graph/update layer holds up in real enterprise workloads.

My read: Supermemory is a serious platform play. It’s likely strongest for teams who want one vendor-shaped surface for context rather than assembling 6 components by hand.

What about Holographic and RetainDB?

This is where the research got thin.

I found much weaker public technical signal for these two than for the systems above.

Holographic

I was not able to substantiate a clearly established, actively documented agent-memory framework with the same confidence as the others in this post.

There are references to “holographic memory” as an idea, and there are adjacent experimental or educational artifacts, but the public signal for a strong, current, production-grade framework was weak in this pass.

RetainDB

Likewise, I did not find strong public technical material that made RetainDB legible enough to treat as a first-tier memory framework alongside Mem0, Honcho, OpenViking, Hindsight, ByteRover, or Supermemory.

That does not mean these projects are fake. It means the evidence quality was not high enough for me to pretend certainty.

That honesty is more useful than fake coverage.

Important additions beyond the original list

A few adjacent systems matter enough that they deserve mention even though they weren’t on the original shortlist.

Zep

Zep remains one of the more established memory backends in the broader market, especially around conversational memory and graph-backed user context. It’s worth tracking because it helped legitimize memory as standalone infrastructure before the current boom.

Letta / MemGPT lineage

The Letta / MemGPT line matters conceptually because it pushed hard on the distinction between working memory and archival memory. Even when newer systems don’t inherit that exact architecture, they are still reacting to that same core insight.

LangGraph / framework-native memory patterns

A lot of agent builders will not buy a standalone memory product at all. They will implement memory patterns inside orchestration stacks like LangGraph or custom toolchains. That means the real competitor to many memory products is not another vendor. It’s internal architecture.

Pros and cons by archetype

Best for raw recall

- Winner: MemPalace

- Why: strongest bias toward preserving original material instead of over-extracting it

Best for app-builder adoption

- Winner: Mem0

- Why: easiest balance of SDK ergonomics, hosted options, and useful defaults

Best for social/entity memory

- Winner: Honcho

- Why: peers, sessions, representations, evolving state

Best for reflective learning

- Winner: Hindsight

- Why: retain / recall / reflect is a stronger cognitive model than plain retrieval

Best for context architecture

- Winner: OpenViking

- Why: treats memory as part of a broader, hierarchical context system

Best for coding teams

- Winner: ByteRover

- Why: versioned context, reviewable knowledge, project-first orientation

Best for platform/API breadth

- Winner: Supermemory

- Why: broad context platform with connectors, plugins, profiles, and enterprise posture

Where the market is converging

Once you read enough of the repo docs, benchmark claims, product pages, Reddit threads, and X discourse, the same pattern keeps showing up.

The market is slowly splitting into 3 camps.

1) Memory as retrieval backend

This camp treats memory as a better recall layer:

- extract facts

- index them

- retrieve them later

- fuse semantic, keyword, graph, or entity signals

That’s where Mem0 and parts of Supermemory fit most naturally.

It’s a useful model because it’s easy to ship. The weakness is that retrieval backends can become very good at remembering facts while still being mediocre at representing changing state, decision rationale, or multi-agent context.

2) Memory as cumulative context substrate

This camp thinks the whole “memory layer” framing is too small.

The real goal is a persistent, structured context environment the agent can keep reading and updating over time. That’s much closer to the direction of OpenViking, and philosophically adjacent to a lot of file-native or context-native systems.

This approach tends to win when the problem is not just forgetting facts, but managing the sprawl of memory, resources, and skills over long-running work.

3) Memory as behavioral learning system

This camp cares less about recall and more about adaptation.

That’s where Hindsight feels most distinct. It wants memory to support learning from experience, reflection, and synthesis, not just retrieval.

That direction matters because real agents do not just need to answer questions about the past. They need to change how they act based on what happened before.

What the discourse reveals without turning this into gossip

The public conversation around these systems is still messy, but it reveals a few useful truths.

Benchmark claims now get challenged fast

That’s healthy.

Memory products used to get away with vague “smarter over time” claims. That window is closing. If a project publishes a big benchmark number now, the community immediately asks:

- what mode was used?

- was it self-reported?

- what was the token budget?

- what got compressed or skipped?

- is the eval harness public?

That pressure is good for everyone.

Coding-agent memory is becoming its own category

This is one of the biggest changes since the first wave of agent tooling.

A coding agent does not need the same memory shape as a personal assistant. It needs:

- repo conventions

- architectural decisions

- durable project state

- repeatable fixes

- shareable context across collaborators and machines

That’s why products like ByteRover feel more specific and more grounded than generic memory layers when the workload is software development.

“Just use a vector DB” is no longer persuasive

A year ago, many agent-memory products were basically packaging for vector search.

That’s not enough anymore.

The stronger systems now talk explicitly about:

- entity linking

- temporal change

- conflict preservation

- reflection

- retrieval fusion

- context loading policy

- observability

That’s a real upgrade in sophistication.

Naming and positioning matter more than they should

This sounds superficial, but it is real.

A tool like Hindsight has a discoverability problem because the name is so common. A tool like Mem0 benefits from being short and clean, but also generic enough that it must keep explaining itself through product design. OpenViking sounds infrastructural, which fits the product. ByteRover sounds more like a dev tool than a memory paper, which also fits the product.

In this category, discoverability is part of distribution, and distribution matters because memory systems need ecosystems around them.

A better way to think about the winners

There probably will not be one memory winner.

The more plausible outcome is a stack of different winners by workload:

- personal assistants and durable preferences: Mem0, Honcho, Supermemory

- verbatim recall and personal archive memory: MemPalace

- context operating systems for more ambitious agent stacks: OpenViking

- reflective / learning-oriented agents: Hindsight

- coding agents and engineering teams: ByteRover

That’s a much more believable market structure than one universal “best memory layer.”

Final take

The important shift is not that we suddenly have “memory solved.”

We don’t.

The important shift is that agent memory has become a real systems layer, and the projects in this category are finally specializing around different failure modes instead of all pretending to do the same thing.

If I had to reduce the landscape to one sentence per product:

- MemPalace preserves more of reality

- Mem0 productizes memory for builders

- Honcho models evolving entities

- Hindsight tries to make agents learn

- OpenViking reframes memory as context architecture

- ByteRover makes memory behave like engineering infrastructure

- Supermemory sells the full context platform layer

That’s a healthier market than we had a year ago.

Still messy. Still over-claimed in places. But healthier.

And if you’re building agents in 2026, this is the real lesson: the hard part is no longer just choosing a model. The hard part is deciding what the agent should remember, how it should update that memory, and how you keep retrieval from quietly lying to the system.